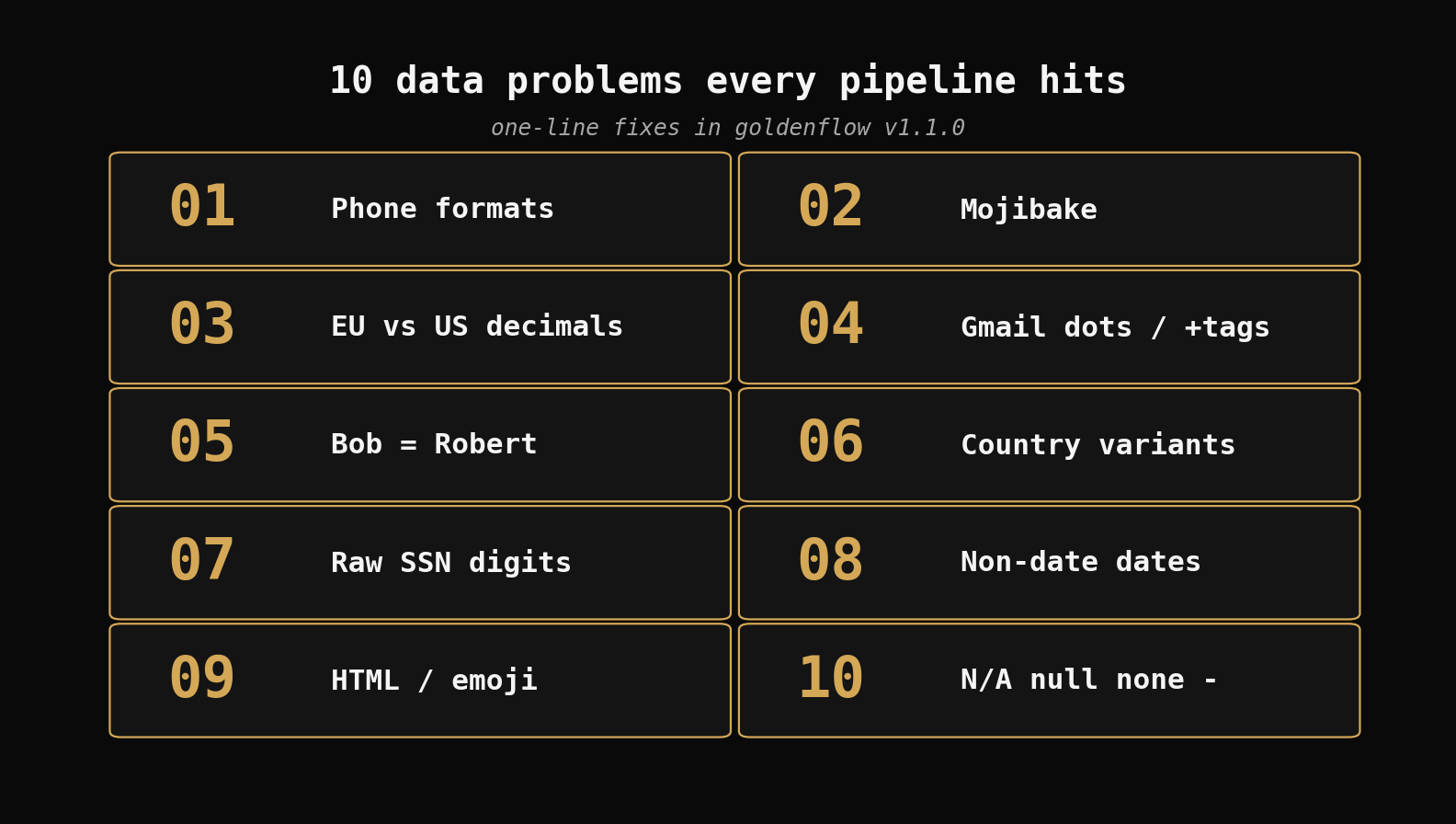

10 Data Problems Every Pipeline Hits and Their Fixes

The same 10 data quality issues show up in every dataset. Here's what they look like and how to fix each in one line.

Every data engineer writes throwaway scripts to fix the same problems. Phone numbers in 15 formats. Dates that aren't dates. "N/A" and "null" and "" all meaning the same thing. The scripts are always slightly different, never reusable, and break when the data changes.

Here are the 10 problems we see in every dataset, what they actually look like, and how to fix each one.

1. Phone numbers in 15 formats

Your CRM has phone numbers entered by humans over a decade. No two look the same.

| Before | After |

|---|---|

| (555) 123-4567 | +15551234567 |

| 555.123.4567 | +15551234567 |

| +1-555-123-4567 | +15551234567 |

| 5551234567 | +15551234567 |

| 1 (555) 123-4567 | +15551234567 |

goldenflow transform contacts.csv

Zero-config mode detects phone columns and normalizes to E.164 automatically. Every downstream system — Twilio, Salesforce, your matching pipeline — expects E.164. Do it once at the source.

2. Mojibake from bad CSV exports

Someone exported from Excel on a Windows machine, someone else opened it on a Mac, and now your text looks like this:

| Before | After |

|---|---|

| café | cafe |

| it’s | it's |

| résumé | resume |

| naïve | naive |

This happens when UTF-8 bytes get decoded as Latin-1. The fix is to re-encode:

import goldenflow

from goldenflow import GoldenFlowConfig, TransformSpec

config = GoldenFlowConfig(transforms=[

TransformSpec(column="description", ops=["fix_mojibake"]),

])

result = goldenflow.transform_df(df, config=config)

fix_mojibake tries the Latin-1 → UTF-8 round-trip. If the text wasn't actually garbled, it returns the original unchanged.

3. European vs US decimal formats

You're merging data from a German office and a US office. One writes 1.234,56 (one thousand two hundred thirty-four and 56 cents). The other writes 1,234.56. Same number, incompatible formats.

| Before (European) | After |

|---|---|

| 1.234,56 | 1234.56 |

| 99,99 | 99.99 |

| 10.000,00 | 10000.0 |

| 3.14 | 3.14 |

comma_decimal is smart about this — if there's no comma in the value, it parses as-is (so 3.14 stays 3.14, not 314). It only applies European conversion when a comma is actually present.

4. Gmail dots and +tags hiding duplicates

Gmail ignores dots in the local part and everything after a +. These are all the same inbox:

| Before | After |

|---|---|

| john.doe@gmail.com | johndoe@gmail.com |

| j.o.h.n.d.o.e@gmail.com | johndoe@gmail.com |

| johndoe+spam@gmail.com | johndoe@gmail.com |

| JohnDoe@Gmail.COM | johndoe@gmail.com |

Your dedup pipeline sees four different emails. Your customer sees one inbox and four of the same marketing email.

config = GoldenFlowConfig(transforms=[

TransformSpec(column="email", ops=["email_normalize"]),

])

This only strips dots for Gmail/Googlemail domains — other providers treat dots as significant.

5. "Bob" and "Robert" are the same person

You're matching records across systems. One has "Bob Smith" and the other has "Robert Smith". Fuzzy matching gives you a 60% score — not enough to auto-match, too high to ignore. You end up manually reviewing thousands of maybe-matches.

| Before | After |

|---|---|

| Bob | Robert |

| Bill | William |

| Jim | James |

| Mike | Michael |

| Dick | Richard |

| Liz | Elizabeth |

| Peggy | Margaret |

nickname_standardize maps 50+ common English nicknames to their formal equivalents. Run it before dedup and that 60% match becomes 100%. This was built specifically to improve GoldenMatch results — normalizing nicknames before blocking and scoring eliminates an entire class of false negatives.

6. Country names in 6 variants

Your international dataset has country names entered by humans in different languages, abbreviations, and conventions:

| Before | After |

|---|---|

| United States | US |

| United States of America | US |

| USA | US |

| United Kingdom | GB |

| Great Britain | GB |

| Deutschland | DE |

country_standardize maps common country names, abbreviations, and local names to ISO 3166-1 alpha-2 codes. Unknown values pass through unchanged — you can spot them by filtering for values longer than 2 characters.

7. SSNs stored as raw digits

Someone dumped SSNs into a spreadsheet without formatting. Now they're sitting in a column as 123456789 — no dashes, no masking, ready to be accidentally emailed to the wrong person.

| Transform | Before | After |

|---|---|---|

ssn_format | 123456789 | 123-45-6789 |

ssn_mask | 123456789 | ***-**-6789 |

ssn_mask | 123-45-6789 | ***-**-6789 |

goldenflow transform employees.csv -c config.yaml

# config.yaml:

# transforms:

# - column: ssn

# ops: [ssn_mask]

ssn_format normalizes to the standard dashed format. ssn_mask redacts everything except the last four digits. Both handle raw digits, dashed formats, and spaced formats. Invalid SSNs (wrong digit count) are preserved as-is so you can find and fix them.

8. Dates that aren't dates

Your date column has actual dates, free text someone typed into a date field, and blanks masquerading as dates:

| Before | date_validate | date_iso8601 |

|---|---|---|

| 2024-03-15 | true | 2024-03-15 |

| March 15, 2024 | true | 2024-03-15 |

| 03/15/2024 | true | 2024-03-15 |

| not a date | false | not a date |

| TBD | false | TBD |

Run date_validate first to flag the garbage, then date_iso8601 to normalize the real dates. The invalid values pass through unchanged — they don't silently become null or today's date.

9. HTML tags and emoji in scraped data

You scraped product descriptions or customer reviews. The raw data is full of markup and emoji that will break your NLP pipeline:

| Before | After |

|---|---|

| <p>Great product!</p> | Great product! |

| <b>Bold</b> claim | Bold claim |

| Love this! \U0001f600\U0001f44d | Love this! |

| Check <a href="...">here</a> | Check here |

remove_html_tags strips all HTML tags. remove_emojis strips emoji characters. Chain them: ops: ["remove_html_tags", "remove_emojis", "collapse_whitespace"].

10. "N/A", "null", "none", "-", ""

The classic. Five different ways to say "this field is empty" and none of them are actual nulls. Your aggregations count them as values. Your joins fail silently.

| Before | After |

|---|---|

| N/A | null |

| null | null |

| none | null |

| NA | null |

| - | null |

| (empty string) | null |

import goldenflow

# Zero-config handles this automatically

result = goldenflow.transform_df(df)

null_standardize is one of the auto-apply transforms — zero-config mode runs it on every string column without you asking. It catches the variants that trip up every pipeline.

All of this is in GoldenFlow v1.1.0

pip install goldenflow

goldenflow transform your_data.csv

v1.1.0 ships 76 transforms across 11 categories — including the email, identifier, and URL modules that are new in this release. If you're already using GoldenCheck for data profiling, the --from-findings flag now actually works (it was silently broken in v1.0.0 — the finding-to-transform mapping used the wrong check names).

Full release notes: v1.1.0 on GitHub

Related posts

→ From Dirty CSV to Golden Records: A Python Walkthrough

Take 5,400 messy hospital records from raw CSV to deduplicated golden records — zero-config, then explicit tuning, then LLM boost.

2026-04-06

→ AI-Powered Deduplication: LLMs Supercharge Golden Suite

Enable LLM boost across GoldenCheck, GoldenFlow, and GoldenMatch to catch what fuzzy matching misses — with real costs under $0.10.

2026-03-31

→ Product Catalog Dedup on a Real 1M-Row Dataset: F1 0.05 → 0.36 in Three Steps

Running the full Golden Suite (GoldenCheck → GoldenFlow → GoldenMatch) on the UCI Online Retail II catalog. Real, unsynthetic duplicates. Honest numbers — and how fixing the eval, switching to Vertex AI embeddings, and tuning the threshold lifted F1 7× from a hopeless lexical baseline.

2026-04-11